
5/6/2021 Hmm Learn Python

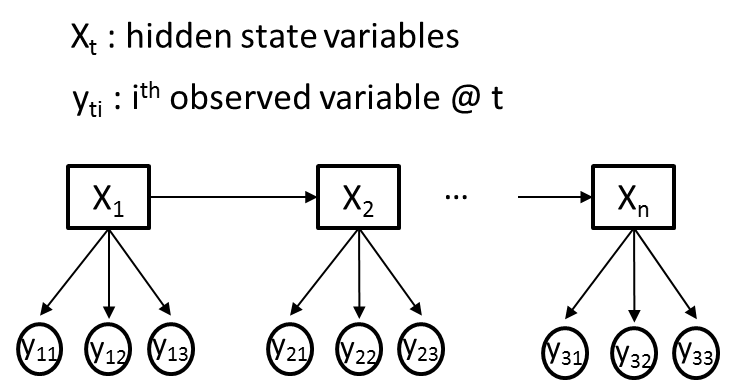

Terminology in HMM

The term hidden refers to the first-order Markov process behind the observations. The Markov process is shown by the interaction between Rainy and Sunny in the diagram, and each of these is a HIDDEN STATE. OBSERVATIONS are known data and refer to Walk, Shop, and Clean in the diagram.

In the machine learning sense, the observations are our training data, and the number of hidden states is a hyperparameter of our model. For the example in the diagram:

- T: length of the observation sequence (we don't have any observations yet)
- N = 2 (number of hidden states)
- M = 3 (number of observation symbols)
- Q = {Rainy, Sunny}
- V = {Walk, Shop, Clean}

State transition probabilities are the arrows pointing to each hidden state. The observation probability matrix is made up of the blue and red arrows pointing to each observation from each hidden state. Each matrix gives the probability of going from one state to another, or of going from a state to an observation.
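As a rough sketch, the pieces named above can be written down as plain Python lists. The probability values here are illustrative assumptions only (the article's own numbers live in its diagram and are not reproduced in the text), and the helper `is_row_stochastic` is a hypothetical name introduced for this example.

```python
# Hidden states Q and observation symbols V, as named in the text.
states = ["Rainy", "Sunny"]           # N = 2
symbols = ["Walk", "Shop", "Clean"]   # M = 3

# Illustrative probabilities only (assumed, not taken from the article).
pi = [0.6, 0.4]                       # initial state distribution
A = [[0.7, 0.3],                      # A[i][j] = P(next state j | current state i)
     [0.4, 0.6]]
B = [[0.1, 0.4, 0.5],                 # B[i][k] = P(symbol k | state i)
     [0.6, 0.3, 0.1]]


def is_row_stochastic(rows, tol=1e-9):
    """Check that every row is a valid probability distribution."""
    return all(min(r) >= 0 and abs(sum(r) - 1.0) < tol for r in rows)


N, M = len(states), len(symbols)
valid = is_row_stochastic([pi]) and is_row_stochastic(A) and is_row_stochastic(B)
```

Each row of the transition matrix and of the observation matrix has to sum to 1, which is what the check verifies: the transition matrix is N x N and the observation matrix is N x M.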

The full model, with known state transition probabilities, observation probability matrix, and initial state distribution, is conventionally denoted $\lambda = (A, B, \pi)$, where $A$ holds the state transition probabilities, $B$ the observation probabilities, and $\pi$ the initial state distribution.

How can we build the above model in Python? In the above case, the emissions are discrete: {Walk, Shop, Clean}. MultinomialHMM from the hmmlearn library is used for the above model.

Problem 3 in Python

Speech recognition with an audio file: predict the words apple, banana, kiwi, lime, orange, peach, and pineapple.

Amplitude can be used as the OBSERVATION for the HMM, but feature engineering will give us better performance. The functions stft and peakfind generate features from the audio signal. Kyle Kastner built an HMM class that takes in 3D arrays; I'm using hmmlearn, which only allows 2D arrays. This is why I'm reducing the features generated by Kyle Kastner's code with `Xtest.mean(axis=2)`.

Going through this modeling took a lot of time to understand. I had the impression that the target variable needs to be the observation. Instead, classification is done by building one HMM for each class and comparing the outputs, calculating the logprob each model assigns to your input.

Mathematical Solution to Problem 1: Forward Algorithm

The alpha pass is the probability of the observation sequence up to time t together with being in a given state at time t, given the model. At time t = 0, the alpha pass is the initial state distribution for state i multiplied by the probability of emitting the first observation O0 from state i.
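The alpha pass and the per-class comparison described above can be sketched in plain Python. This is a minimal illustration, not the hmmlearn implementation: the parameter values, the two class models, and the helper names `forward` and `classify` are all assumptions made for this example, and no scaling is applied, so it only suits short sequences.

```python
import math


def forward(pi, A, B, obs):
    """Alpha pass: return (alpha, P(O | model)) for a discrete HMM."""
    n = len(pi)
    # t = 0: start in state i, then emit the first observation.
    alpha = [[pi[i] * B[i][obs[0]] for i in range(n)]]
    for o in obs[1:]:
        prev = alpha[-1]
        # Sum over every predecessor state i, move to j, then emit o.
        alpha.append([sum(prev[i] * A[i][j] for i in range(n)) * B[j][o]
                      for j in range(n)])
    return alpha, sum(alpha[-1])


def classify(models, obs):
    """Pick the label whose model assigns the highest log-probability."""
    scores = {label: math.log(forward(*m, obs)[1]) for label, m in models.items()}
    return max(scores, key=scores.get), scores


# Illustrative parameters (assumed): states Rainy, Sunny; Walk=0, Shop=1, Clean=2.
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.1, 0.4, 0.5], [0.6, 0.3, 0.1]]

alpha, prob = forward(pi, A, B, [0, 1, 2])   # Walk, Shop, Clean

# Two hypothetical class models that differ only in their emission rows.
models = {"class_a": (pi, A, B), "class_b": (pi, A, B[::-1])}
label, scores = classify(models, [0, 1, 2])
```

In the speech example, each word would get its own trained model, and the predicted word is the one whose model scores the input highest.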

Mathematical Solution to Problem 2: Backward Algorithm

Given the model and the observations, this gives the probability of being in state qi at time t.

Mathematical Solution to Problem 3: Forward-Backward Algorithm

The di-gamma is the probability of moving from state qi to state qj at time t, given the model and the observations. The transition probabilities from i to j are then summed. The transition and emission probability matrices are estimated with the di-gammas. Iterate as long as P(O | model) increases.

Written by Eugine Kang
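The re-estimation loop from the forward-backward section can be sketched as follows. This is an unscaled, single-sequence illustration in plain Python under assumed starting values. The function names and the observation sequence are hypothetical, and a practical implementation would add scaling or work in log space.

```python
def forward(pi, A, B, obs):
    """Alpha pass for a discrete HMM (unscaled)."""
    n = len(pi)
    alpha = [[pi[i] * B[i][obs[0]] for i in range(n)]]
    for o in obs[1:]:
        prev = alpha[-1]
        alpha.append([sum(prev[i] * A[i][j] for i in range(n)) * B[j][o]
                      for j in range(n)])
    return alpha


def backward(A, B, obs):
    """Beta pass, built from the last time step back to the first."""
    n = len(A)
    beta = [[1.0] * n]
    for o in reversed(obs[1:]):
        nxt = beta[0]
        beta.insert(0, [sum(A[i][j] * B[j][o] * nxt[j] for j in range(n))
                        for i in range(n)])
    return beta


def baum_welch_step(pi, A, B, obs):
    """One re-estimation step; returns (pi, A, B, P(O | old model))."""
    n, m, T = len(pi), len(B[0]), len(obs)
    alpha, beta = forward(pi, A, B, obs), backward(A, B, obs)
    prob = sum(alpha[-1])
    # gamma[t][i]: probability of being in state i at time t.
    gamma = [[alpha[t][i] * beta[t][i] / prob for i in range(n)] for t in range(T)]
    # digamma[t][i][j]: probability of moving from i at time t to j at time t + 1.
    digamma = [[[alpha[t][i] * A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j] / prob
                 for j in range(n)] for i in range(n)] for t in range(T - 1)]
    new_pi = gamma[0]
    new_A = [[sum(d[i][j] for d in digamma) / sum(g[i] for g in gamma[:-1])
              for j in range(n)] for i in range(n)]
    new_B = [[sum(g[i] for g, o in zip(gamma, obs) if o == k) / sum(g[i] for g in gamma)
              for k in range(m)] for i in range(n)]
    return new_pi, new_A, new_B, prob


# Assumed starting guess and a made-up observation sequence (Walk=0, Shop=1, Clean=2).
pi = [0.6, 0.4]
A = [[0.7, 0.3], [0.4, 0.6]]
B = [[0.1, 0.4, 0.5], [0.6, 0.3, 0.1]]
obs = [0, 1, 2, 0, 0, 2, 1, 2]

history = []                      # P(O | model) before each update
for _ in range(5):
    pi, A, B, prob = baum_welch_step(pi, A, B, obs)
    history.append(prob)
```

The values collected in `history` should never decrease from one iteration to the next, which is the stopping signal the text refers to.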
